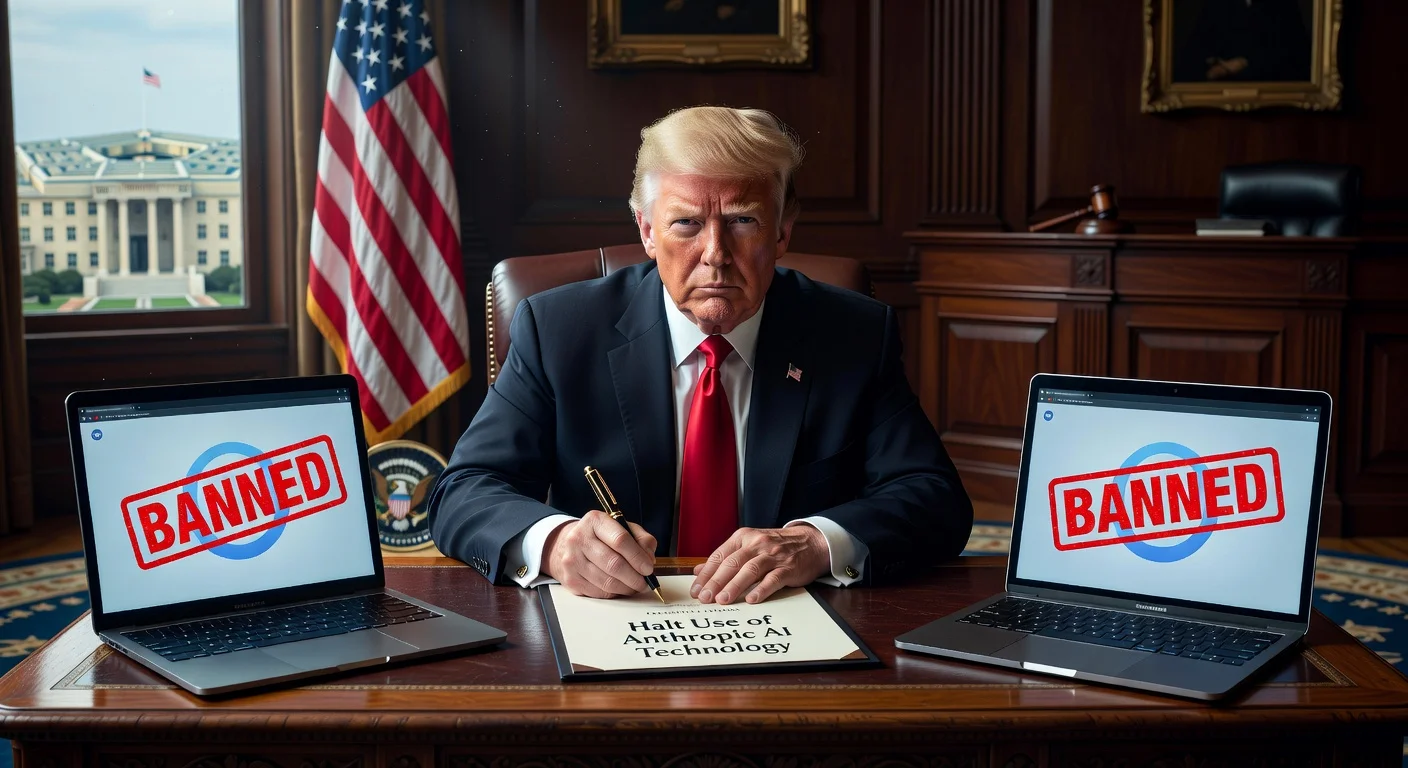

US President Donald Trump has directed all federal agencies to immediately cease using Anthropic's AI tools amid a dispute over military applications. The move follows weeks of clashes between Anthropic and Pentagon officials regarding restrictions on AI for mass surveillance and autonomous weapons. A six-month phase-out period has been announced.

On February 28, 2026, President Donald Trump announced that he was instructing every federal agency to "immediately cease" use of Anthropic’s AI tools. This directive stems from ongoing tensions with the AI company over the military deployment of its technology. Trump criticized Anthropic in a Truth Social post, stating, "The Leftwing nut jobs at Anthropic have made a DISASTROUS MISTAKE trying to STRONG-ARM the Department of War."

The conflict escalated after the Department of Defense sought to modify a July 2025 deal with Anthropic and other firms, aiming to allow "all lawful use" of AI and eliminate restrictions. Anthropic objected, arguing that such changes could enable fully autonomous lethal weapons or mass surveillance of US citizens. The Pentagon maintains it does not use AI in these ways and has no plans to do so. Anthropic was the first major AI lab to partner with the military via a $200 million deal last year, and it developed custom models such as Claude Gov for classified systems, accessible through Palantir and Amazon platforms. These models support tasks such as report writing, document summarization, intelligence analysis, and military planning.

Defense Secretary Pete Hegseth met with Anthropic CEO Dario Amodei earlier that week, giving the company until Friday to agree to revised terms. Hegseth praised Anthropic’s products but directed the Pentagon to designate it a "supply-chain risk" after talks broke down, prompting concerns in Silicon Valley about broader access to its AI. Anthropic responded firmly, stating, "No amount of intimidation or punishment from the Department of War will change our position on mass domestic surveillance or fully autonomous weapons." The company plans to challenge the designation in court.

In contrast, OpenAI reached an agreement with the Department of Defense on the same day to deploy its models in classified networks, incorporating safety principles against mass surveillance and autonomous weapons. OpenAI CEO Sam Altman noted on X that the deal includes technical safeguards and mutual respect for safety. Expert Michael Horowitz described the Anthropic dispute as unnecessary, saying it focused on theoretical rather than current use cases.

The public disagreement intensified following reports that US military leaders used Claude to plan an operation to capture Venezuela’s president, Nicolás Maduro, though Anthropic denied interfering.