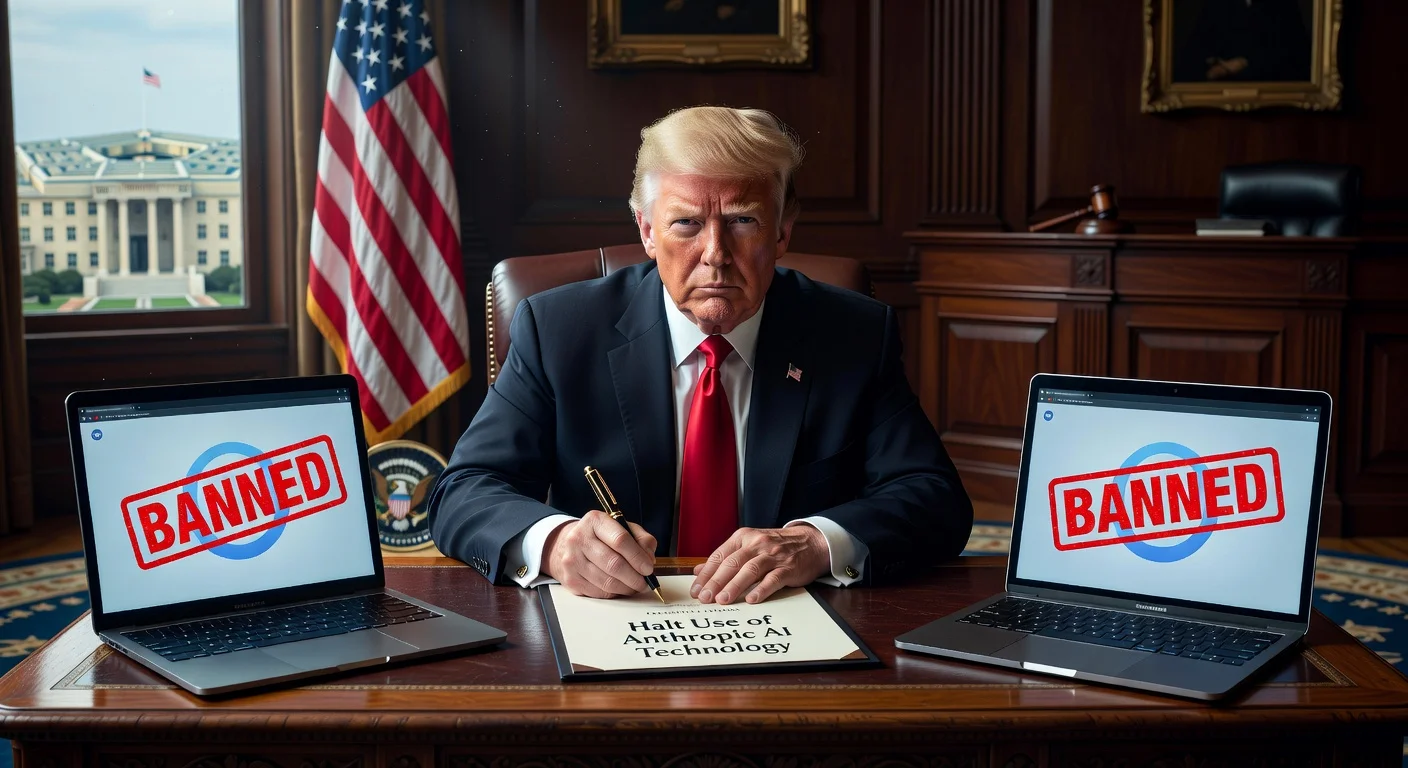

US President Donald Trump has directed federal agencies to immediately cease using Anthropic's AI technology. The order follows a dispute with the Pentagon, in which the company refused to allow unconditional military use of its Claude models. Anthropic has vowed to challenge the Pentagon's ban in court.

On February 27, 2026, US President Donald Trump posted on Truth Social directing every federal agency to 'immediately' cease all use of Anthropic's technology. He stated, 'We don't need it, we don't want it, and will not do business with them again!' Trump also announced a six-month phase-out period for agencies like the Department of Defense.

The dispute arose when the Pentagon demanded unconditional military use of Anthropic's Claude models. The company insisted its technology should not be used for mass surveillance of US citizens or for fully autonomous weapons systems. The Pentagon maintains that it operates within the law and that suppliers cannot dictate how their products are used.

The Pentagon set a deadline of 5:01 p.m. on February 27 for compliance, threatening to invoke the Defense Production Act, a Cold War-era law granting broad powers to direct private industry for national security. Additionally, Defense Secretary Pete Hegseth ordered the designation of Anthropic as a 'supply chain risk,' barring military contractors from any commercial activity with the company. Hegseth wrote on X, 'Anthropic delivered a master class in arrogance and betrayal.'

Anthropic responded in a statement, 'No amount of intimidation or punishment from the Department of War will change our position... We will challenge any supply chain risk designation in court.' Support came from the industry, with hundreds of employees from Google, DeepMind, and OpenAI signing an open letter titled 'We Will Not Be Divided,' urging refusal of demands for surveillance and autonomous killing. OpenAI CEO Sam Altman wrote in a memo that his company seeks similar red lines against mass surveillance and autonomous lethal weapons.

Democratic Senator Mark Warner criticized the move, stating that it raises concerns about national security decisions being driven by political considerations. Alexandra Givens of the Center for Democracy and Technology called it a dangerous precedent that chills companies' ability to engage frankly with the government. The conflict echoes the 2018 Google-Pentagon clash, in which employees protested the use of AI to analyze drone footage.