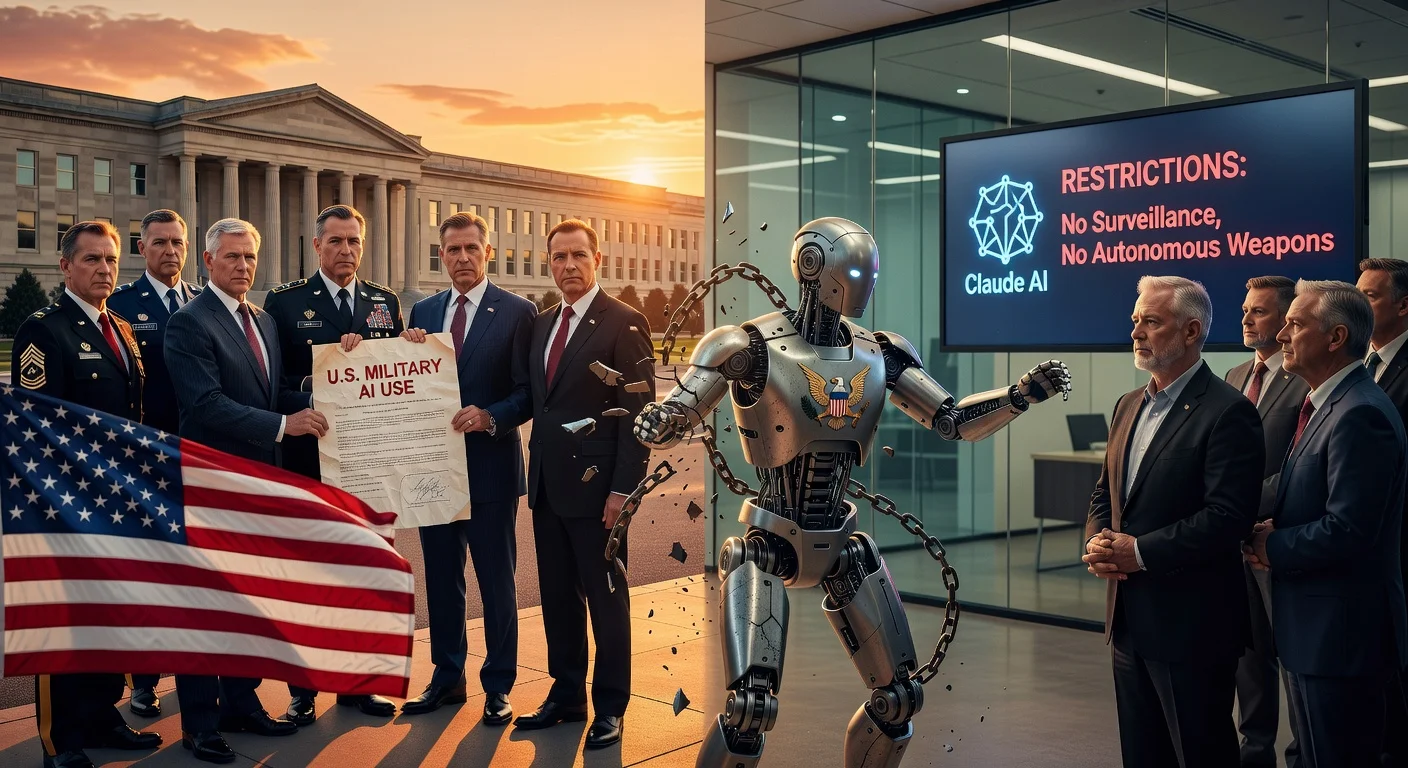

The Pentagon is considering ending its relationship with AI firm Anthropic over disagreements about safeguards. Anthropic, the maker of the Claude AI model, has sought to keep hard limits on fully autonomous weapons and mass domestic surveillance. The dispute stems from the Pentagon's desire to apply AI models in warfighting scenarios, which Anthropic has declined to support.

The potential rift between the Pentagon and Anthropic highlights tensions over developing AI for military purposes. According to reports, the U.S. Department of Defense wants to integrate advanced AI models into warfighting applications. Anthropic, however, has expressed firm reservations and emphasized the need for strict boundaries on fully autonomous weapons and extensive domestic surveillance programs.

Anthropic's position underscores its commitment to ethical AI deployment: it refuses to support military applications that could cross these lines. The company prioritizes safety measures that the Pentagon's plans appear to challenge. No decision to end the relationship has been officially confirmed, but the disagreement points to broader debates over AI governance in defense contexts.

This development, reported on February 16, 2026, reflects ongoing scrutiny of how AI technologies are regulated in sensitive sectors.