Following last week's federal ban on its AI tools, Anthropic has resumed negotiations with the US Defense Department to avert a supply chain risk designation. Meanwhile, OpenAI's parallel military agreement is under fire from employees, rivals, and Anthropic CEO Dario Amodei, who in a leaked memo accused the company of making misleading claims.

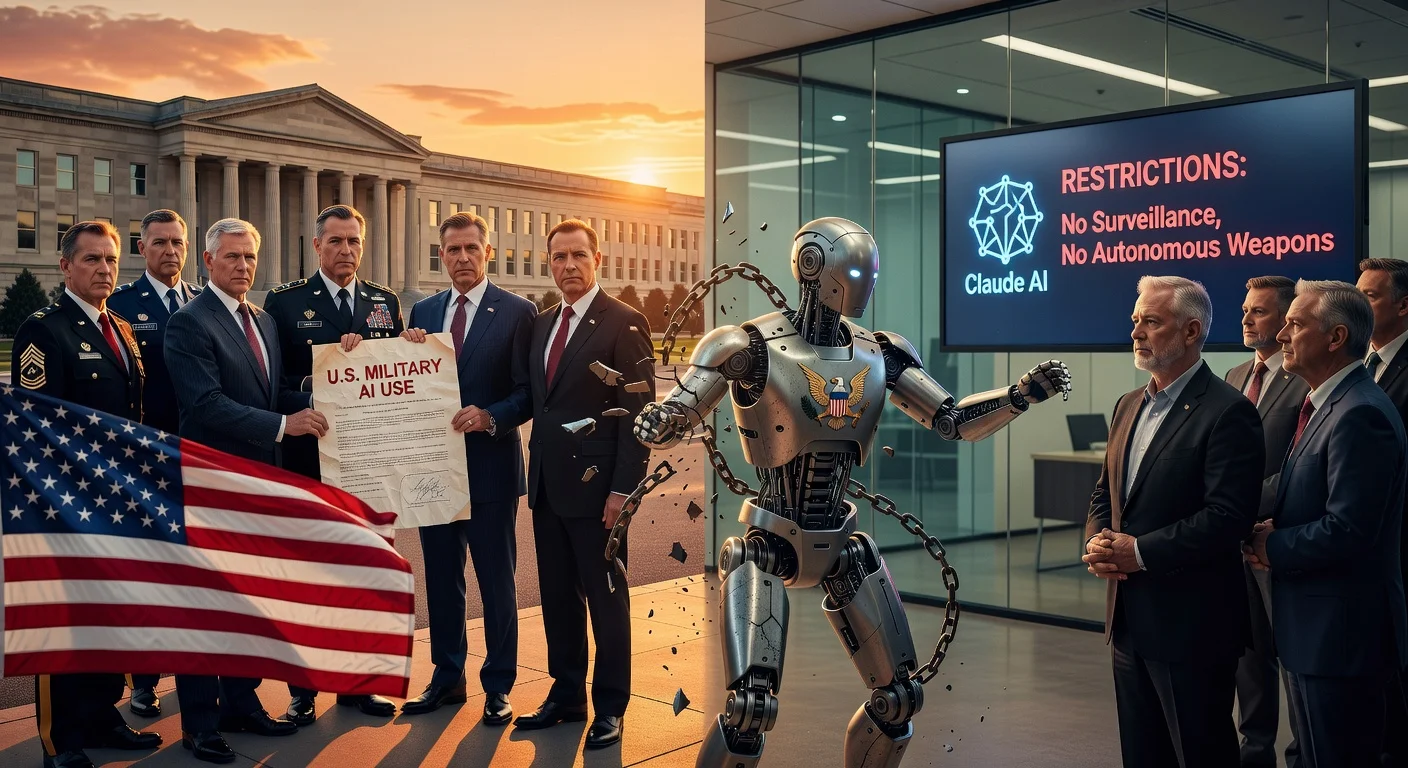

Anthropic is back in talks with the Pentagon in a bid to avoid being labeled a supply chain risk, a designation typically reserved for foreign adversaries, the Financial Times and Bloomberg reported on March 5, 2026. CEO Dario Amodei is negotiating with Under Secretary of Defense for Research and Engineering Emil Michael, after a $200 million contract awarded in 2025 collapsed over language prohibiting mass surveillance.

Amodei detailed the breakdown in a memo to staff. According to the memo, the department offered to honor Anthropic's terms if the company removed a clause on 'analysis of bulk acquired data,' which covered precisely the surveillance scenario Anthropic sought to block. Anthropic refused, prompting the Pentagon to threaten both cancellation and the risk label. On February 28, President Trump ordered federal agencies to cease using Anthropic technology, though a six-month phase-out permitted continued access, including for planning an air strike on Iran.

In the memo, Amodei lambasted OpenAI's response as 'just straight up lies' and attributed some of Anthropic's troubles to its not having offered 'dictator-style praise to Trump,' in contrast, he suggested, to OpenAI CEO Sam Altman. OpenAI secured its own Defense Department deal shortly after Anthropic's fallout. Altman claimed on X that he had advised against the risk designation and suggested Anthropic should have accepted similar terms. OpenAI later amended its agreement to bar mass surveillance of Americans.

OpenAI staff criticized the deal in an all-hands meeting, pressing Altman for details; he acknowledged internal sloppiness on social media. Previously, OpenAI prohibited military use but allowed Pentagon testing via Microsoft. The controversy boosted Anthropic's Claude to the top of Apple's free apps chart.

Part of the Anthropic–Pentagon AI contract dispute series.