After Anthropic CEO Dario Amodei said in late February that the company would not allow its Claude model to be used for mass domestic surveillance or fully autonomous weapons, senior Pentagon officials said they had no intention of using AI for domestic surveillance and insisted that private firms cannot set binding limits on how the U.S. military employs AI tools.

Last July, the Pentagon’s chief digital and artificial intelligence officer, Doug Matty, announced contract awards of up to $200 million each to four tech companies—Anthropic, Google, OpenAI, and xAI—to provide advanced AI models for Defense Department missions. Matty said the department intended to speed adoption of commercial AI for “Joint mission essential tasks” in the “warfighting domain,” but the Pentagon released few operational details, citing national security.

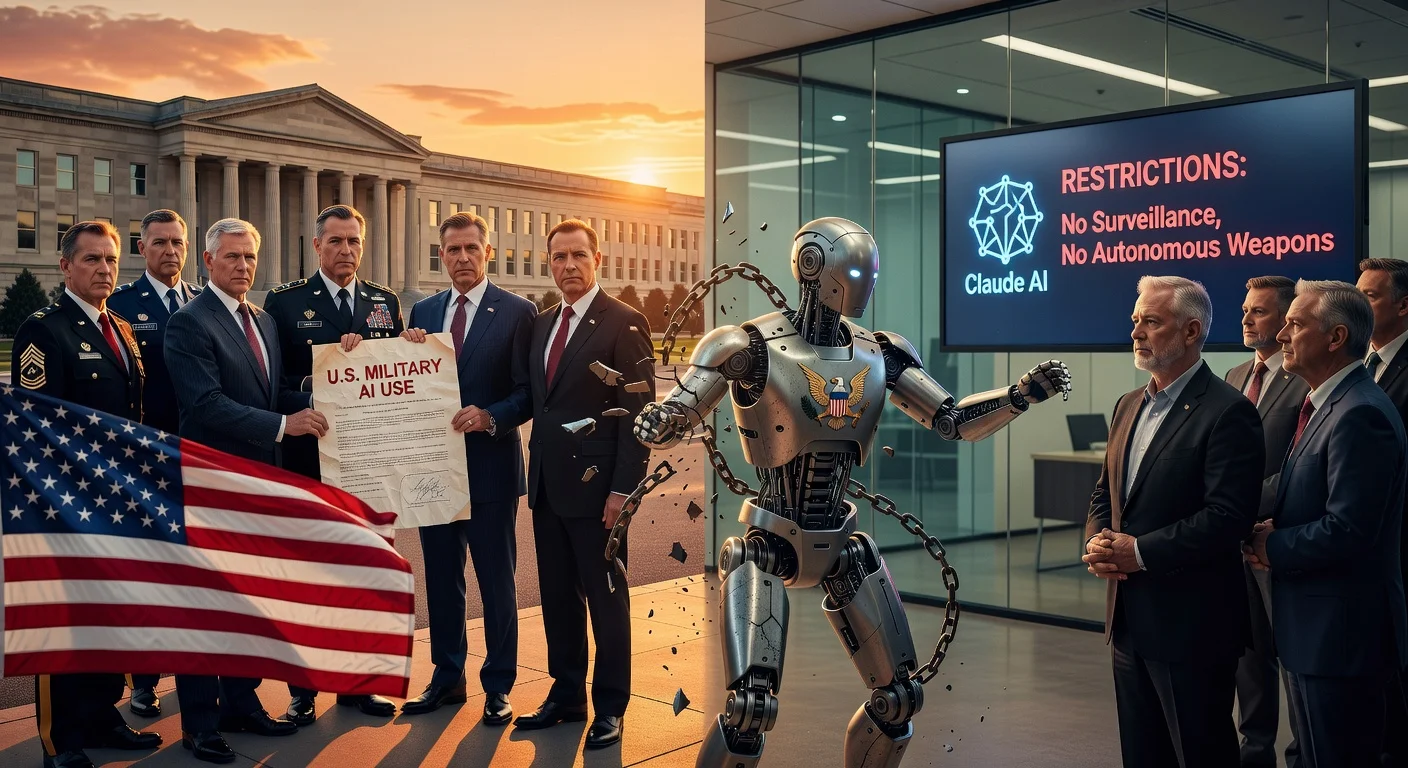

The relatively opaque awards drew fresh attention at the end of February, when Anthropic said it was insisting on limits for Claude in a “narrow set of cases.” In a Feb. 26 statement, Amodei said he strongly supported using AI to help defend the United States and other democracies, but argued that some applications could undermine democratic values—including “mass domestic surveillance” and “fully autonomous weapons,” which he described as self-guided combat drones.

Senior Defense Department officials responded by pushing back on both the premise and the company’s leverage. According to reporting cited by The Nation, Pentagon officials said they do not intend to use AI for domestic surveillance and that unmanned weapons systems will remain under human oversight. But they also argued that contractors should not be able to impose their own civil-liberties conditions on Pentagon operations. Emil Michael, the undersecretary of defense for research and engineering, was quoted as saying: “We won’t have any BigTech company decide Americans’ civil liberties.”

The Nation reported that, during negotiations, Michael also raised a separate question about whether Anthropic would oppose the use of Claude in nuclear-related missions such as missile defense, and that Amodei did not object to that use.

The dispute has highlighted a broader tension between the Pentagon’s push to integrate generative AI into intelligence, targeting and weapons development and the guardrails AI companies say they need to prevent misuse. The Nation pointed to longstanding Defense Department efforts such as Project Maven, which began by using AI to help analyze drone video for potential targets, and DARPA’s Collaborative Operations in Denied Environment (CODE) initiative, which has worked on autonomy for groups of drones operating under preset rules.

Official Pentagon policy on autonomy is outlined in DoD Directive 3000.09, which states that autonomous and semi-autonomous weapons should be designed so commanders and operators can exercise “appropriate levels of human judgment over the use of force.” Critics have argued that the policy’s flexibility still leaves room for autonomy that could significantly reduce real-time human control.

As AI becomes more integrated into military planning and operations, the Anthropic-Pentagon standoff underscores an unresolved question at the center of the U.S. military’s AI expansion: how to reconcile rapid adoption of commercial systems with demands for enforceable limits on domestic surveillance and the delegation of lethal force to machines.