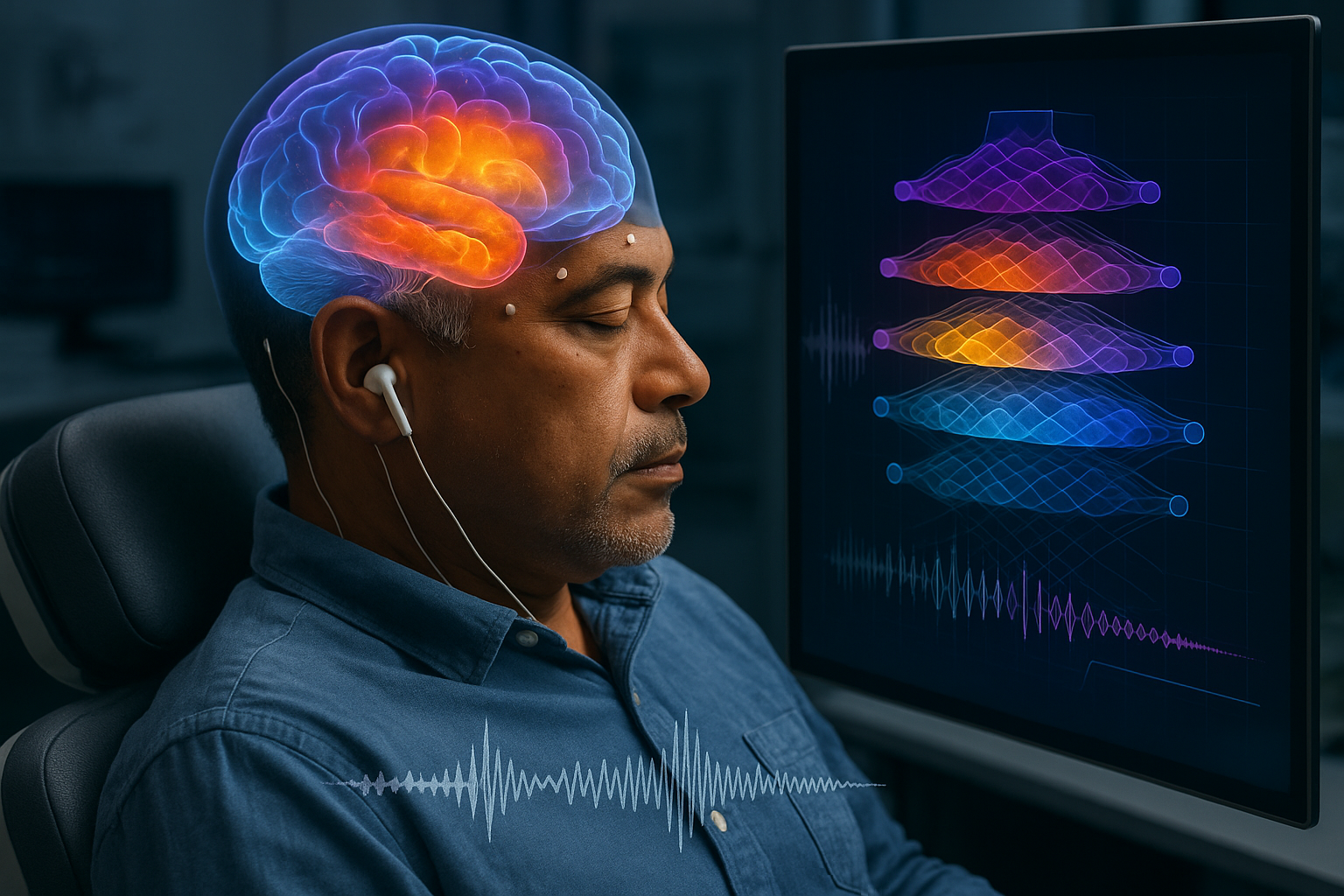

A new study reports that as people listen to a spoken story, neural activity in key language regions unfolds over time in a way that mirrors the layer-by-layer computations inside large language models. The researchers, who analyzed electrocorticography recordings from epilepsy patients during a 30-minute podcast, also released an open dataset intended to help other scientists test competing theories of how meaning is built in the brain.

Scientists have reported evidence that the brain’s processing of spoken language unfolds in a sequence that resembles the layered operations of modern large language models.

The research, published in Nature Communications on Nov. 26, 2025, was led by Dr. Ariel Goldstein of the Hebrew University of Jerusalem, with collaborators including Dr. Mariano Schain of Google Research and Prof. Uri Hasson and Eric Ham of Princeton University.

Listening experiment and neural recordings

The team analyzed electrocorticography (ECoG) recordings from nine epilepsy patients as they listened to a 30-minute audio podcast, “Monkey in the Middle” (NPR, 2017). The researchers modeled neural responses to each word in the story using contextual embeddings drawn from multiple hidden layers of the GPT2-XL model and from Llama 2.

They focused on several regions along a ventral language-processing pathway, including areas in the superior temporal gyrus, the inferior frontal gyrus (which includes Broca’s area), and the temporal pole.
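For readers who want a concrete picture of this kind of analysis, the sketch below fits a linear "encoding model" that predicts a per-word neural signal from word embeddings and scores it by cross-validated correlation. It is an illustration only, not the study's code: the data are synthetic, and the ridge penalty `alpha`, the five contiguous folds and the function names `ridge_fit` and `encoding_score` are assumptions made for this example.

```python
import numpy as np

def ridge_fit(X, y, alpha=1.0):
    """Closed-form ridge weights; the caller is expected to center X and y."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ y)

def encoding_score(embeddings, neural, n_folds=5, alpha=1.0):
    """Correlation between held-out predictions and the actual per-word signal."""
    n_words = embeddings.shape[0]
    folds = np.array_split(np.arange(n_words), n_folds)  # contiguous folds (assumption)
    predicted = np.zeros(n_words)
    for test_idx in folds:
        train_mask = np.ones(n_words, dtype=bool)
        train_mask[test_idx] = False
        # Center using training data only, so no test information leaks in.
        x_mean = embeddings[train_mask].mean(axis=0)
        y_mean = neural[train_mask].mean()
        w = ridge_fit(embeddings[train_mask] - x_mean,
                      neural[train_mask] - y_mean, alpha)
        predicted[test_idx] = (embeddings[test_idx] - x_mean) @ w + y_mean
    return float(np.corrcoef(predicted, neural)[0, 1])

# Synthetic stand-ins: 500 "words", 20-dimensional "embeddings", one "electrode".
rng = np.random.default_rng(0)
n_words, dim = 500, 20
embeddings = rng.standard_normal((n_words, dim))
true_w = rng.standard_normal(dim)
neural = embeddings @ true_w + 0.5 * rng.standard_normal(n_words)

score = encoding_score(embeddings, neural)
# Shuffling the signal breaks the word-to-response link and gives a baseline.
null_score = encoding_score(embeddings, rng.permutation(neural))
print(f"encoding score: {score:.2f}, shuffled baseline: {null_score:.2f}")
```

In a real analysis, the embeddings would come from one hidden layer of a language model and the signal from one electrode at a fixed time offset from each word's onset; repeating the fit across layers and offsets gives the layer-by-time picture the article describes.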

A layered time course of meaning

The study reports that brain responses matched the models’ internal representations in a time-ordered pattern: earlier neural signals aligned more strongly with shallower model layers, while later neural activity corresponded more closely to deeper layers that integrate broader context. The association was described as particularly strong in higher-level language regions such as Broca’s area.
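One way to quantify such a time-ordered pattern is to find, for each model layer, the time offset at which that layer best predicts neural activity, then check whether that offset grows with layer depth. The sketch below does this on synthetic scores. It is an illustration under stated assumptions, not the authors' procedure: the lag grid, the simulated peak times and the helper name `peak_lags` are hypothetical, and only the layer count (GPT-2 XL has 48 transformer layers) reflects a real model.

```python
import numpy as np

def peak_lags(scores, lags):
    """For each layer (row of scores), return the lag with the highest score."""
    return lags[np.argmax(scores, axis=1)]

# Hypothetical lag grid: offsets relative to word onset, in milliseconds.
lags_ms = np.arange(-2000, 2001, 25)
n_layers = 48  # number of transformer layers in GPT-2 XL

# Synthetic encoding scores: each layer gets a bump whose peak shifts later
# with depth. The -100 to 400 ms range is invented for illustration.
rng = np.random.default_rng(1)
true_peaks = np.linspace(-100, 400, n_layers)
scores = np.exp(-0.5 * ((lags_ms[None, :] - true_peaks[:, None]) / 300.0) ** 2)
scores += 0.005 * rng.standard_normal(scores.shape)  # small measurement noise

peaks = peak_lags(scores, lags_ms)
# Pearson correlation between layer index and peak lag; a value near 1 means
# deeper layers peak later in time.
r = float(np.corrcoef(np.arange(n_layers), peaks)[0, 1])
print(f"layer-to-peak-lag correlation: {r:.2f}")
```

A strong positive correlation in a given brain region would correspond to the pattern described above, with shallow layers matching early activity and deep layers matching later activity.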

“What surprised us most was how closely the brain’s temporal unfolding of meaning matches the sequence of transformations inside large language models,” Goldstein said, according to a summary released by the Hebrew University of Jerusalem.

Implications and data release

The findings are presented as a challenge to strictly rule-based accounts of language comprehension, suggesting instead that context-sensitive, statistical representations may explain real-time neural activity more effectively than traditional linguistic units such as phonemes and morphemes.

The researchers also released a public dataset intended to support further work in language neuroscience, including neural recordings aligned with linguistic features.

Separate from the Nature Communications report, a related data descriptor in the journal Scientific Data describes a “Podcast” ECoG dataset from nine participants with 1,330 electrodes listening to the same 30-minute stimulus, along with extracted features ranging from phonetic information to large language model embeddings and accompanying tutorials for analysis.