A new model from linguists Richard Futrell and Michael Hahn suggests that many hallmark features of human language—such as familiar words, predictable ordering and meaning built up step by step—reflect constraints on sequential information processing rather than a drive for maximum data compression. The work was published in Nature Human Behaviour.

Human language is remarkably rich and intricate. From an information-theory standpoint, the same ideas could, in principle, be transmitted in far more compact strings—similar to how computers represent information using binary digits.

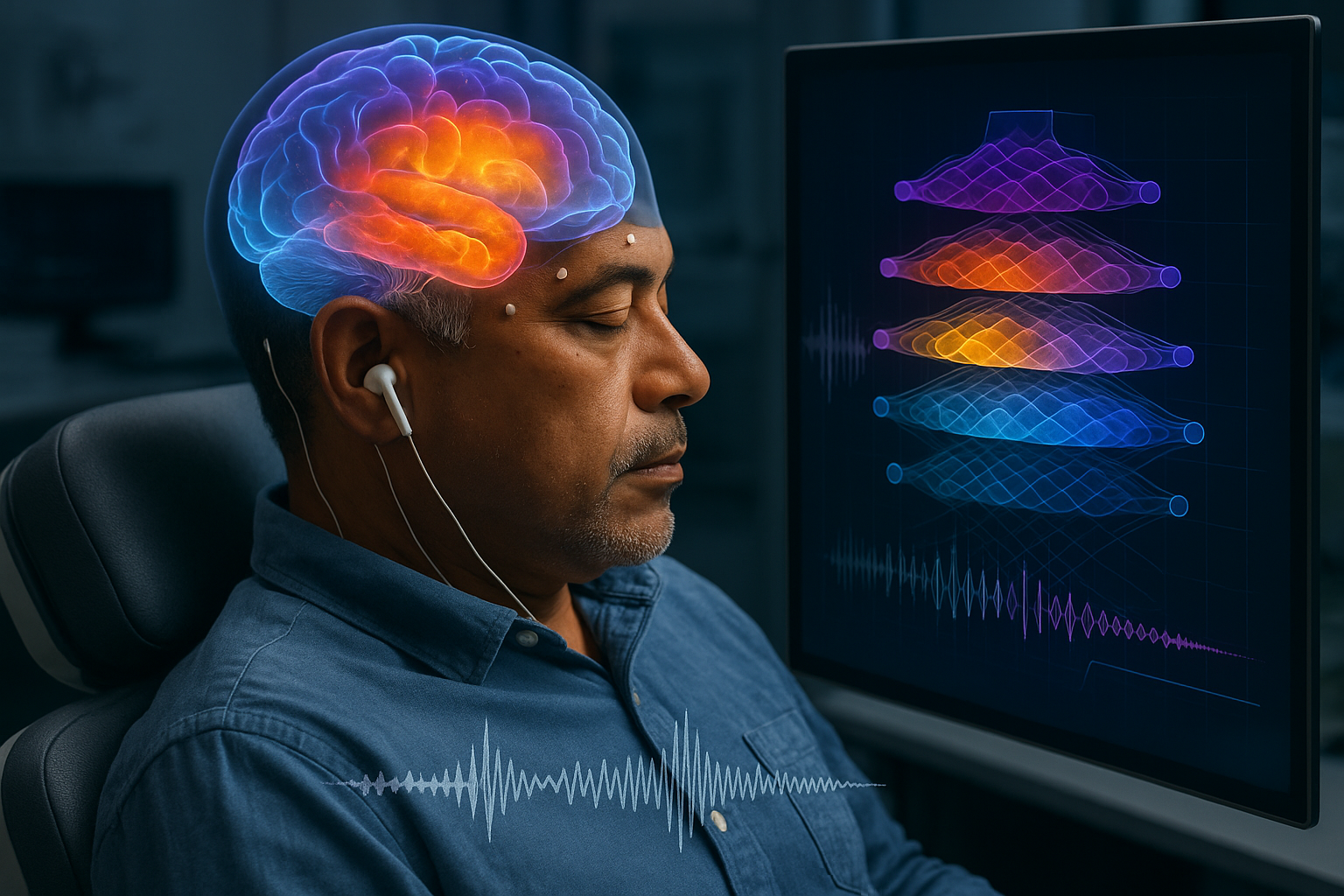

Michael Hahn, a linguist at Saarland University in Saarbrücken, Germany, and Richard Futrell of the University of California, Irvine, set out to explain why everyday speech does not resemble a tightly compressed digital code. In a paper published in Nature Human Behaviour in November 2025, the researchers present a model in which “natural-language-like” structure arises when communication is constrained by limits on sequential prediction, that is, by how much information a listener must carry forward from what has already been heard to anticipate what comes next.

In that framework, language benefits from patterns that are easy for people to process as a stream. A ScienceDaily summary of the work, citing materials from Saarland University, uses examples to illustrate the idea: an invented word such as “gol” for a hybrid concept (half cat and half dog) would be hard to understand because it does not map cleanly onto shared experience, and a scrambled blend like “gadcot” is similarly difficult to interpret. By contrast, “cat and dog” is immediately meaningful.

The researchers also point to word order as a signal that helps listeners reduce uncertainty in real time. The ScienceDaily release highlights the German noun phrase “Die fünf grünen Autos” (“the five green cars”) as an example of how meaning can be built incrementally as each word narrows the set of plausible interpretations. Reordering those words—for example, “Grünen fünf die Autos”—disrupts that predictability and makes comprehension harder.
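The incremental narrowing in the German example can be sketched with a toy calculation. This is an illustration only, not the model from the paper: the eight-item set of possible referents, the small vocabularies and the function name `remaining_uncertainty` are all assumptions made here, and every referent is treated as equally likely. The sketch counts how many bits of uncertainty remain after each word. It does not capture why the scrambled order is harder, which in the researchers' account depends on predictability rather than on the final interpretation.

```python
import math
from itertools import product

# Hypothetical toy vocabularies (not from the paper): each word fixes one attribute.
COUNTS = {"drei": 3, "fünf": 5}
COLOURS = {"grünen": "green", "roten": "red"}
NOUNS = {"Autos": "cars", "Fahrräder": "bikes"}

# Every (count, colour, noun) combination is a possible referent: 2 * 2 * 2 = 8.
REFERENTS = list(product(COUNTS.values(), COLOURS.values(), NOUNS.values()))


def remaining_uncertainty(words):
    """Return the uncertainty in bits before any word and after each word.

    Assumes all remaining referents are equally likely, so the uncertainty
    is log2 of the number of candidates still compatible with the input.
    """
    candidates = REFERENTS
    trace = [math.log2(len(candidates))]
    for word in words:
        if word in COUNTS:
            candidates = [c for c in candidates if c[0] == COUNTS[word]]
        elif word in COLOURS:
            candidates = [c for c in candidates if c[1] == COLOURS[word]]
        elif word in NOUNS:
            candidates = [c for c in candidates if c[2] == NOUNS[word]]
        # Words outside the toy vocabularies (such as "Die") rule nothing out.
        trace.append(math.log2(len(candidates)))
    return trace


print(remaining_uncertainty("Die fünf grünen Autos".split()))
# prints [3.0, 3.0, 2.0, 1.0, 0.0]
```

In this toy setting the article “Die” rules nothing out, and each later word halves the set of candidates until a single interpretation is left.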

Beyond explaining why language is not “maximally compressed,” the paper’s discussion connects the findings to machine learning. Futrell and Hahn argue that natural language is structured in a way that makes next-token prediction comparatively easier under cognitive constraints, a point they say is relevant to modern large language models.