In the latest development in the Anthropic supply chain risk controversy, a U.S. federal appeals court on April 9 denied Anthropic's emergency motion to block the Trump administration's blacklisting of its AI technology. The court expedited the case, setting oral arguments for May 19, but ruled that the balance of equities favors the government, a setback for Anthropic after an earlier district court injunction in its favor.

The U.S. Court of Appeals for the District of Columbia Circuit refused to halt the Trump administration's designation of Anthropic as a national security supply-chain risk. A panel of three Republican-appointed judges, including Trump appointees Gregory Katsas and Neomi Rao, recognized that Anthropic could suffer irreparable harm, including financial losses and alleged retaliation for First Amendment-protected speech, but found insufficient evidence of chilled speech and gave priority to the government's interests amid military conflict.

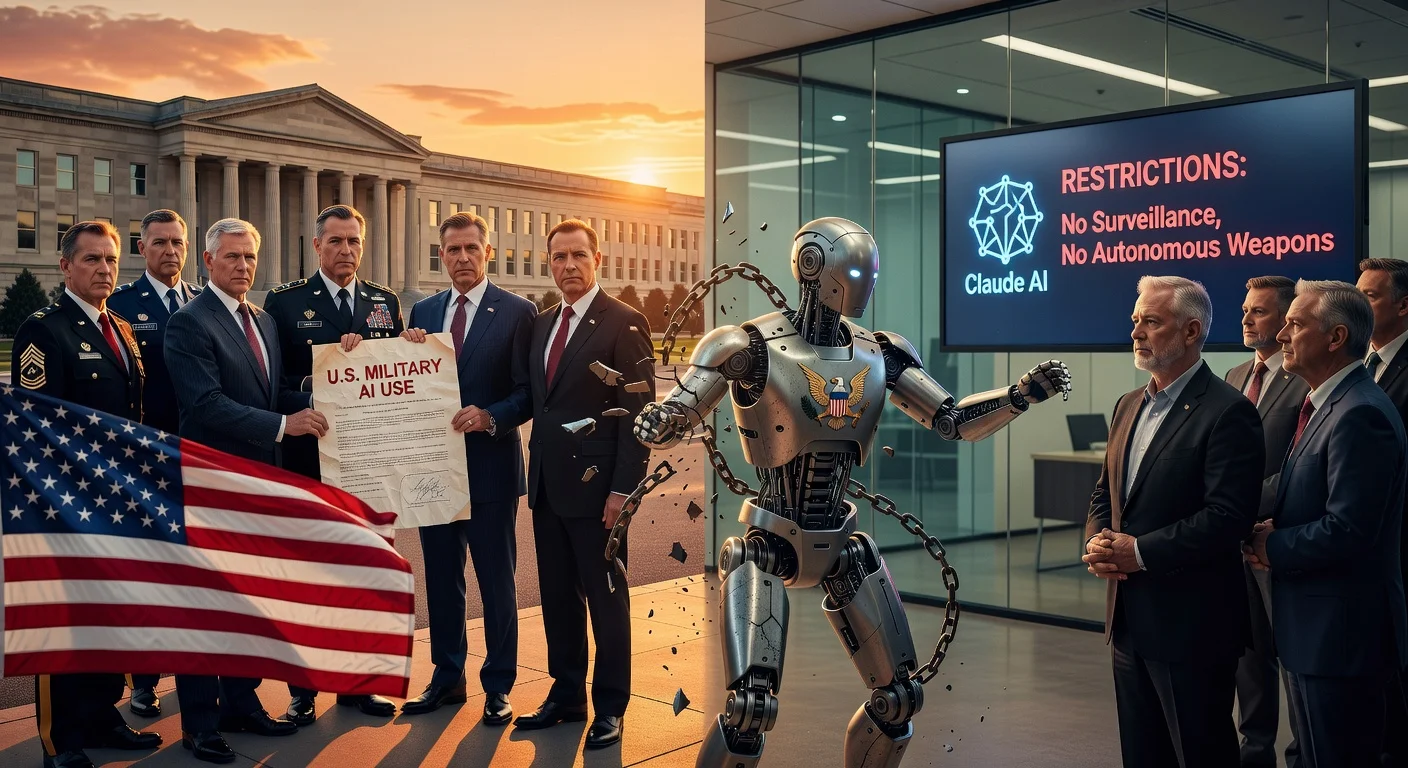

The blacklist stems from Anthropic's refusal to allow its Claude AI models to be used for autonomous warfare and mass surveillance of Americans. President Trump directed federal agencies to stop using the technology, and Defense Secretary Pete Hegseth barred military contractors from doing business with the firm. Acting Attorney General Todd Blanche called the ruling a 'resounding victory for military readiness,' emphasizing presidential authority over the Department of War (formerly Defense).

The ruling follows U.S. District Judge Rita Lin's March 27 preliminary injunction in California, which blocked the initial March 4 designation as arbitrary and as retaliation for First Amendment-protected speech; the administration is appealing that injunction to the 9th Circuit. Anthropic said it is confident that courts will ultimately find the blacklist unlawful and reiterated its commitment to safe AI. The Computer & Communications Industry Association warned that procedural lapses in such designations could harm U.S. innovation.

Part of the 'Anthropic supply chain risk controversy' series.