Researchers at the University of Birmingham used facial motion capture to compare how autistic and non-autistic adults produce facial expressions of anger, happiness and sadness, finding consistent differences in which facial features were emphasized. The work, published in *Autism Research*, suggests some misunderstandings about emotion may stem from mismatched expressive “styles” across groups rather than a one-sided problem.

A study led by researchers at the University of Birmingham has detailed how autistic and non-autistic adults move their faces when expressing basic emotions, identifying differences that could contribute to miscommunication.

Published in *Autism Research*, the study recorded facial motion capture data from 25 autistic adults and 26 non-autistic adults. Participants produced 4,896 expressions of anger, happiness and sadness in total, split evenly between two contexts: 2,448 “cued” expressions, in which they matched facial movements to sounds, and 2,448 expressions produced while speaking. Researchers reported extracting more than 265 million data points to build a high-resolution library of facial movements.

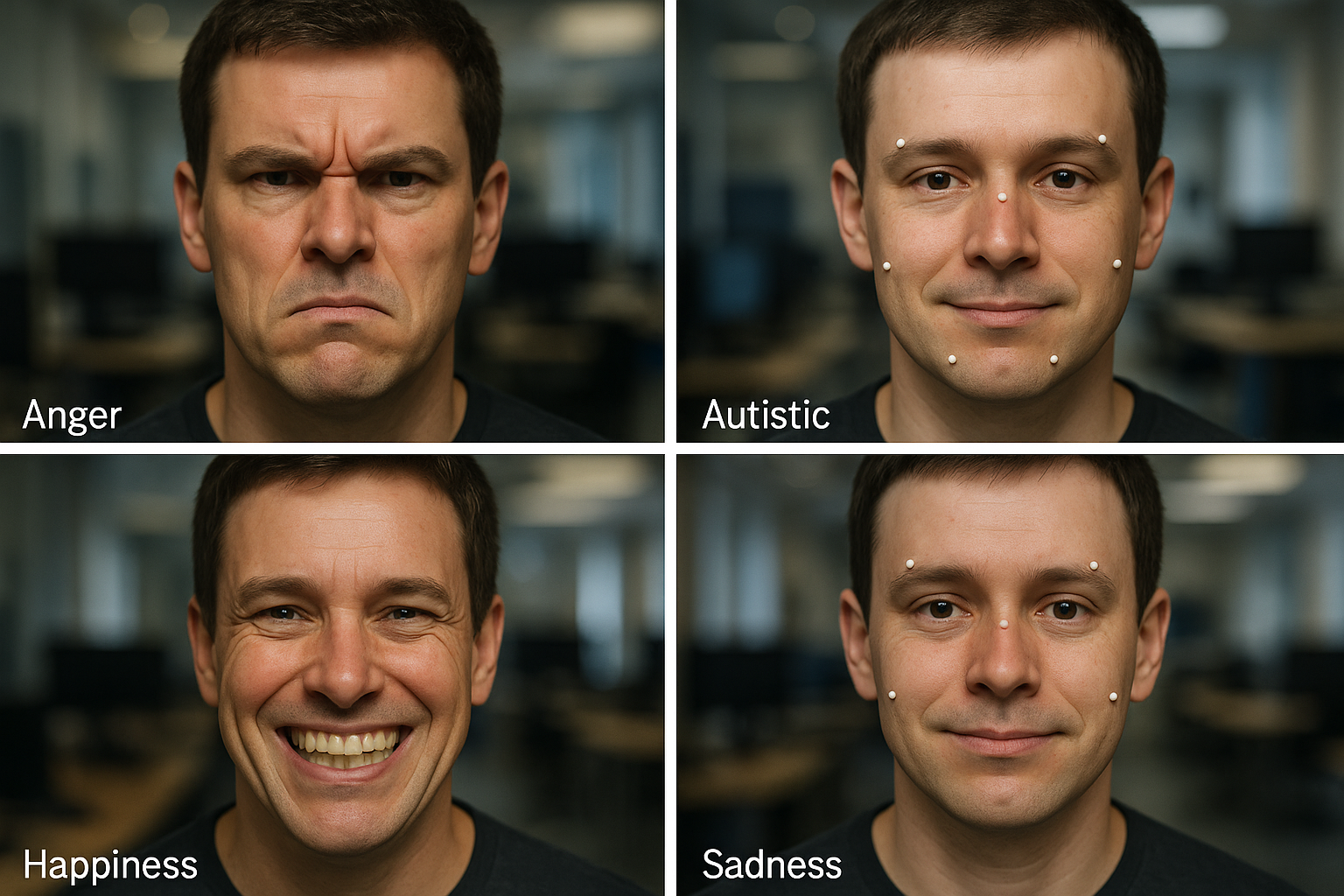

The analysis found emotion-specific differences in how expressions were produced. For anger, autistic participants relied more on the mouth and less on the eyebrows than non-autistic participants. For happiness, autistic participants showed a less exaggerated smile that did not “reach the eyes.” For sadness, autistic participants more often produced a downturned look by raising the upper lip more than their non-autistic peers. The research team also reported that autistic participants produced a wider range of unique expressions.

The study additionally examined alexithymia—often described as difficulty identifying and describing one’s own emotions—and found that higher alexithymia was associated with less clearly differentiated facial expressions for anger and happiness, which could make those emotions appear more ambiguous.

Dr. Connor Keating, who led the work at the University of Birmingham and is now based at the University of Oxford, said the differences extended beyond the “shape” of expressions to how they unfold over time: “Our findings suggest autistic and non-autistic people differ not only in the appearance of facial expressions, but also in how smoothly these expressions are formed. These mismatches in facial expressions may help to explain why autistic people struggle to recognize non-autistic expressions and vice versa.”

Professor Jennifer Cook, the senior author at the University of Birmingham, said the results support a view of emotional expression differences as potentially reciprocal rather than inherently deficient: “Autistic and non-autistic people may express emotions in ways that are different but equally meaningful—almost like speaking different languages. What has sometimes been interpreted as difficulties for autistic people might instead reflect a two-way challenge in understanding each other’s expressions.”

According to the University of Birmingham, the project was funded by the UK Medical Research Council and the European Union’s Horizon 2020 Research and Innovation Programme. The paper is titled “Mismatching Expressions: Spatiotemporal and Kinematic Differences in Autistic and Non-Autistic Facial Expressions” (DOI: 10.1002/aur.70157).