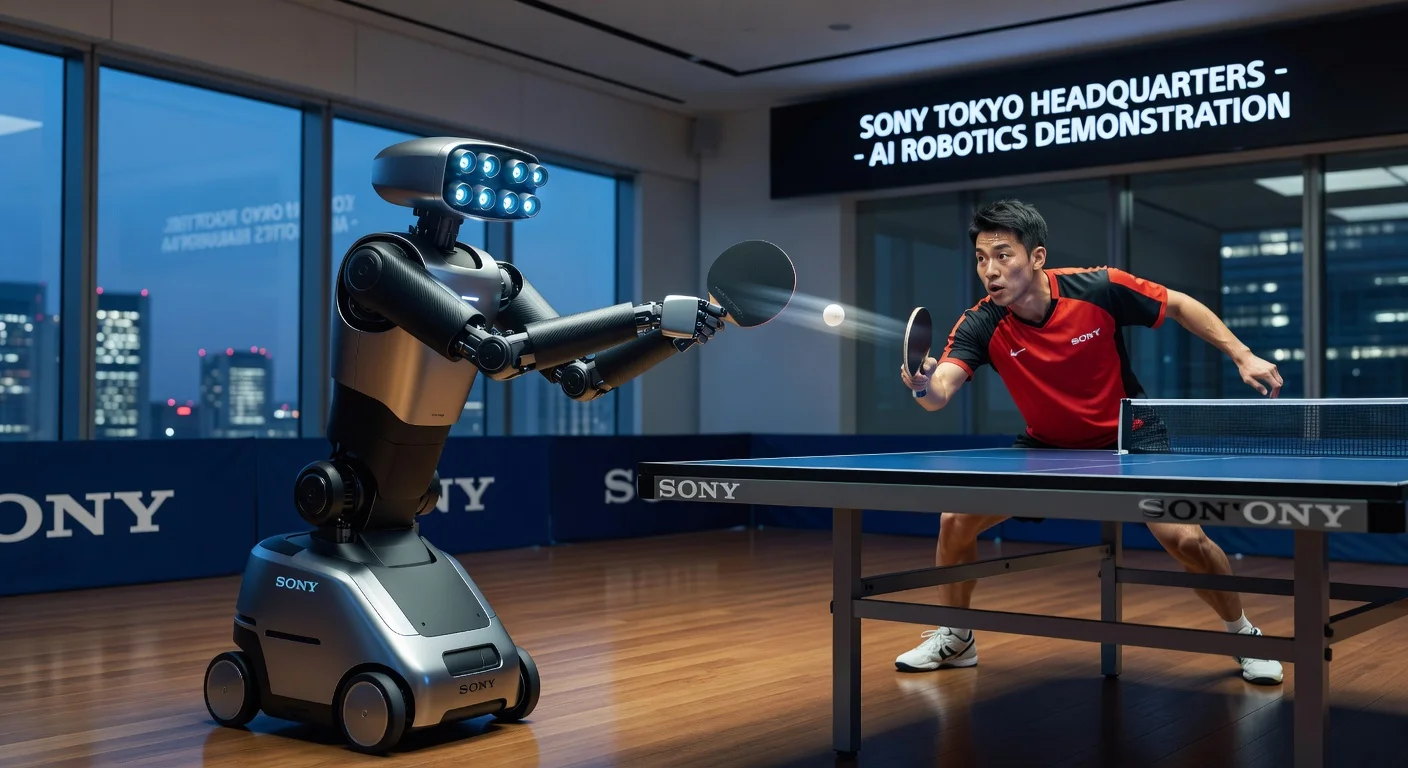

Sony AI's table tennis robot Ace has challenged, and sometimes defeated, professional human players, performing at an expert level. A study published Wednesday in Nature details how it learned via reinforcement learning and performed on an Olympic-sized court at Sony's Tokyo headquarters. The robot uses nine cameras and tracks the ball's spin by following the ball's logo.

Sony AI researchers developed Ace, which performed at a human expert level against professionals. Explaining the use of reinforcement learning, Peter Dürr, a Sony AI researcher and co-author of the study, said, “There’s no way to program a robot by hand to play table tennis. You have to learn how to play from experience.”

An Olympic-sized table tennis court was built at Sony's headquarters in Tokyo, with two umpires from the Japanese Table Tennis Association officiating. Professional players Minami Ando and Kakeru Sone competed against Ace, which secured some victories. In December, Ace defeated all but one of four highly skilled players.

Michael Spranger, president of Sony AI, noted, “Speed is really one of the fundamental issues in robotics today, especially in scenarios or environments that are not fixed.” The robot's speed, arm reach, and performance were made comparable to those of a skilled athlete who trains 20 hours a week, and it plays by official rules. “The goal is to have some level of comparability... and win really at the level of AI and the level of decision-making and tactics,” he said.

Olympian Kinjiro Nakamura, who competed in the 1992 Barcelona Games, observed a shot by Ace and remarked, “No one else would have been able to do that... means that there is a possibility that a human could do it too.” Sony calls the result “the first time a robot has achieved human, expert-level play in a commonly played competitive sport in the physical world.”

The technology could be applied to manufacturing and other industries. John Billingsley, a retired professor, praised the work, saying that progress comes from contests.