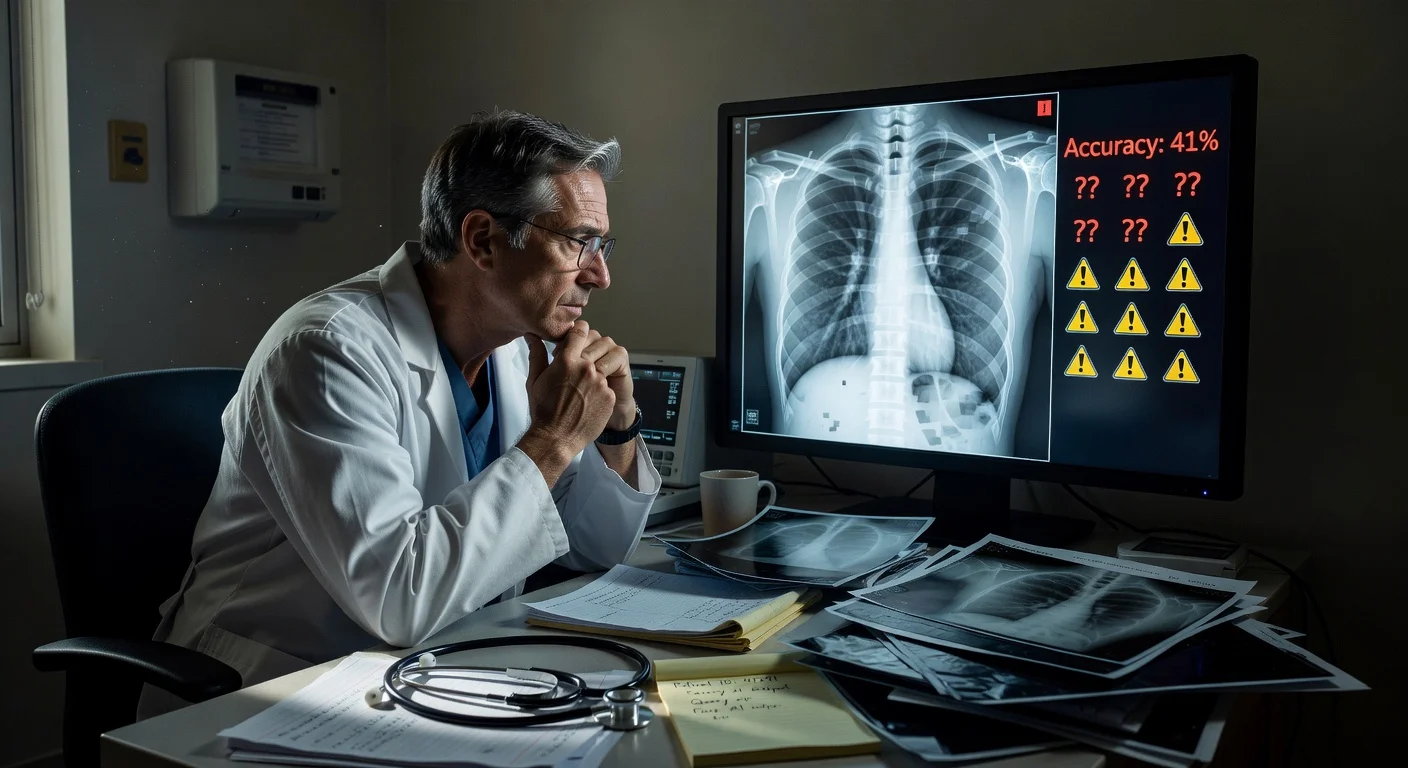

A study published March 24, 2026 in *Radiology* reports that AI-generated “deepfake” X-rays can be convincing enough to mislead radiologists and several multimodal AI systems. When radiologists were not told that fakes were included, they recognized the AI-generated images only 41% of the time. Once warned, their average accuracy in distinguishing real from fake images rose to 75%. The findings highlight potential risks for medical imaging security and clinical decision-making.

Radiologists from 12 institutions across six countries (the United States, France, Germany, Turkey, the United Kingdom and the United Arab Emirates) took part in the study's image-review tests. *Radiology* is the journal of the Radiological Society of North America.

The study included 17 radiologists with experience ranging from beginners to physicians with up to 40 years in practice. They evaluated 264 X-ray images split evenly between real scans and AI-generated images.

Participants reviewed two image sets with no overlap. One set mixed real images with AI-generated radiographs made using ChatGPT and included X-rays from different parts of the body. The second set focused on chest X-rays, with half real and half generated using RoentGen, an open-source diffusion model developed by Stanford Medicine researchers.

When radiologists were not told that fake images were included, they recognized the AI-generated X-rays 41% of the time. After they were informed that synthetic images were present, their average accuracy in distinguishing real from fake images rose to 75%.

Performance varied widely across individuals. Radiologists correctly identified between 58% and 92% of the ChatGPT-generated images. For RoentGen-generated chest X-rays, radiologists’ accuracy ranged from 62% to 78%.

The researchers also tested four multimodal large language models on the same task: GPT-4o and GPT-5 from OpenAI, Gemini 2.5 Pro from Google, and Llama 4 Maverick from Meta. Their accuracy ranged from 57% to 85%. Even GPT-4o, the model used through ChatGPT to generate some of the deepfake images, did not detect all of them, though it outperformed the other models.

The study found no link between years of radiology experience and the ability to identify fake X-rays, but reported that musculoskeletal radiologists performed significantly better than other subspecialists.

Lead author Mickael Tordjman, M.D., a post-doctoral fellow at the Icahn School of Medicine at Mount Sinai in New York, said the results point to both legal and cybersecurity vulnerabilities. “This creates a high-stakes vulnerability for fraudulent litigation if, for example, a fabricated fracture could be indistinguishable from a real one,” he said, adding that there is “a significant cybersecurity risk if hackers were to gain access to a hospital’s network and inject synthetic images to manipulate patient diagnoses or cause widespread clinical chaos by undermining the fundamental reliability of the digital medical record.”

Tordjman also described visual patterns that may appear in synthetic images, saying deepfake medical images can look “too perfect,” with overly smooth bones, unnaturally straight spines, overly symmetrical lungs, excessively uniform blood vessel patterns and unusually clean-looking fractures.

To reduce the risk of tampering and misattribution, the researchers recommended safeguards including invisible watermarks embedded directly into images and cryptographic signatures linked to the imaging technologist at the time of image capture. They also said they released a curated deepfake dataset with interactive quizzes intended for training and awareness.
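The article does not say how such capture-time signatures would be implemented. The following is a minimal sketch of the general idea only, assuming a hypothetical per-technologist secret key (`TECHNOLOGIST_KEYS`) and hypothetical helpers `sign_at_capture` and `verify`. It binds a hash of the image bytes to the technologist ID and capture time, so any later change to the pixels or metadata fails verification. To stay standard-library only, it swaps in a keyed hash (HMAC-SHA256) for the public-key signature a real deployment would more likely use, and it does not cover invisible watermarking.

```python
import hashlib
import hmac
import json

# Hypothetical per-technologist secret keys. A production system would more
# likely use public-key signatures (e.g. Ed25519) so that verifiers never hold
# the signing key; HMAC stands in here only to keep the sketch stdlib-only.
TECHNOLOGIST_KEYS = {"tech-042": b"example-secret-key-do-not-use"}


def _canonical(record: dict) -> bytes:
    """Serialize the metadata deterministically so the tag is reproducible."""
    return json.dumps(record, sort_keys=True).encode("utf-8")


def sign_at_capture(pixel_bytes: bytes, technologist_id: str, captured_at: str) -> dict:
    """Bind the image content to the technologist and capture time."""
    record = {
        "technologist_id": technologist_id,
        "captured_at": captured_at,
        "image_sha256": hashlib.sha256(pixel_bytes).hexdigest(),
    }
    key = TECHNOLOGIST_KEYS[technologist_id]
    record["tag"] = hmac.new(key, _canonical(record), hashlib.sha256).hexdigest()
    return record


def verify(pixel_bytes: bytes, record: dict) -> bool:
    """Return True only if neither the image nor its metadata has changed."""
    key = TECHNOLOGIST_KEYS.get(record.get("technologist_id"))
    if key is None:
        return False  # unknown technologist: cannot vouch for this image
    unsigned = {k: v for k, v in record.items() if k != "tag"}
    if unsigned.get("image_sha256") != hashlib.sha256(pixel_bytes).hexdigest():
        return False  # pixels differ from what was signed at capture
    expected = hmac.new(key, _canonical(unsigned), hashlib.sha256).hexdigest()
    # Constant-time comparison of the recomputed and stored tags.
    return hmac.compare_digest(expected.encode("ascii"),
                               str(record.get("tag", "")).encode("utf-8"))


if __name__ == "__main__":
    original = bytes(range(16))  # stand-in for raw pixel data
    rec = sign_at_capture(original, "tech-042", "2026-03-24T09:15:00Z")
    print(verify(original, rec))            # True
    print(verify(original + b"\xff", rec))  # False: an image was altered or injected
```

Hashing the pixels rather than signing them directly keeps the signed payload small, and the timing-safe comparison avoids leaking how much of a forged tag matched.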

“We are potentially only seeing the tip of the iceberg,” Tordjman said, arguing that AI-generated 3D images such as CT and MRI could be the next step and that detection tools and educational resources should be developed early.